ONE Troubleshooting Guide

This guide helps you resolve the most common errors you might encounter while working with ONE.

| If you do not find a solution to your issue, contact the Ataccama Support team. |

This guide is intended for advanced users, that is, those with access to Ataccama ONE Desktop and the application configuration in the ONE web application (Global Settings tab).

Profiling

Job stays in Submitted status after a long period of inactivity

- Problem

  After ONE has been idle for a long time (for example, over 12 hours), the first subsequent job does not progress past the `Submitted` state. Any following jobs are performed without issues.

- Possible cause

  There is an issue with how the logging context is cleared after a subscription attempt fails.

- Possible solution

  There are two options, depending on whether you need to use auditing in Data Processing Module (DPM):

  - Auditing can be disabled.

    If auditing is enabled in DPM, you can turn it off by setting the property `ataccama.audit.enabled=false` in the DPM configuration.

    For more information, see DPM Configuration, section Audit configuration.

  - Auditing cannot be disabled.

    If you need to continue using auditing, set the property `ataccama.authentication.internal.jwt.generator.streamingTokenExpiration` in the DPM configuration to a value that exceeds the expected idle period, for example, `18h`.

    The property accepts the following units: `ns` (nanoseconds), `us` (microseconds), `ms` (milliseconds), `s` (seconds), `m` (minutes), `h` (hours), `d` (days).

    Keep in mind that extending the validity of the JWT token can lead to security issues, as the subscription can remain active even after the token has been revoked.
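When choosing a value for the token expiration property, it can help to compare it against the longest idle period you expect. The following is a minimal Python sketch, not part of ONE, that converts values using the units listed above and checks that one duration exceeds another. It assumes a value is a single whole number followed by one unit (such as `18h`); whether the property also accepts decimals or combined values is not covered here. The function names are hypothetical.

```python
import re

# Unit suffixes listed in this guide, mapped to seconds.
_UNIT_SECONDS = {
    "ns": 1e-9,
    "us": 1e-6,
    "ms": 1e-3,
    "s": 1.0,
    "m": 60.0,
    "h": 3600.0,
    "d": 86400.0,
}

# Assumption: one whole number followed by exactly one unit, for example "18h".
_DURATION = re.compile(r"^(\d+)(ns|us|ms|s|m|h|d)$")


def duration_seconds(value: str) -> float:
    """Convert a value such as '18h' to seconds."""
    match = _DURATION.match(value.strip())
    if match is None:
        raise ValueError(f"unsupported duration: {value!r}")
    amount, unit = match.groups()
    return int(amount) * _UNIT_SECONDS[unit]


def covers_idle_period(expiration: str, idle_period: str) -> bool:
    """Return True if the token expiration is longer than the idle period."""
    return duration_seconds(expiration) > duration_seconds(idle_period)
```

For example, `covers_idle_period("18h", "12h")` is true, while a 30-minute expiration would not cover a 12-hour idle period.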

Data processing

DPE takes a long time to start processing

- Problem

  Compared to earlier versions, DPE might spend more time in the `STARTING` status before it begins processing data.

- Solution

  This behavior is expected. Starting from 13.9.0, queries used for reading data (such as get the row count or get the last partition) are executed in DPE instead of DPM. As a result, the DPM throughput was significantly improved, although DPE might initially appear slower in comparison.

DQ evaluation failing

If DQ evaluation is failing, first check that one set of credentials on the connection is configured as default.

Post-processing plan failing

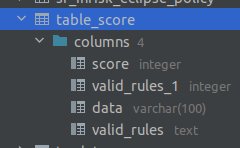

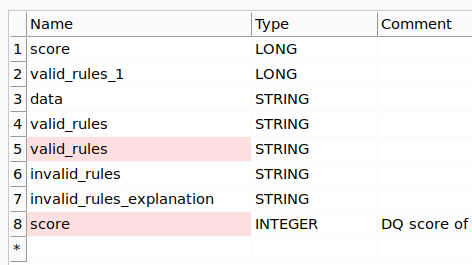

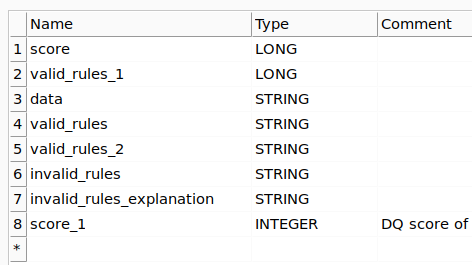

When database column names duplicate the system auxiliary column names, post-processing fails. To resolve this, add a suffix (for example, `_1`) to the post-processing auxiliary columns in ONE Desktop.

Runtime job takes too long to process

- Problem

  Runtime job takes too long to process.

- Possible solution

  Enable performance profiling in the Global Runtime Configuration. This way you can view the individual runtime steps to identify performance issues that might have occurred during execution.

  To enable profiling, add the following configuration in the Runtime Configuration section of the DPM Admin Console. For more information, see DPM and DPE Configuration in DPM Admin Console, section Runtime configuration.

  The file names are arbitrary. However, we recommend keeping them reasonably short and avoiding any special characters and spaces.

  Example

      <?xml version='1.0' encoding='UTF-8'?>
      <runtimeConfiguration>
        <runtimeComponents>
          <runtimeComponent samplingPeriod="20" class="com.ataccama.dqc.processor.monitoring.sampling.SamplingComponent">
            <writers>
              <writer filename="dqc_sampling_output.txt" stdout="false" detailed="true" class="com.ataccama.dqc.processor.monitoring.sampling.writer.SamplingTextWriter"/>
              <writer filename="dqc_sampling_output.dot" stdout="false" class="com.ataccama.dqc.processor.monitoring.sampling.writer.SamplingDotWriter"/>
            </writers>
          </runtimeComponent>
        </runtimeComponents>
      </runtimeConfiguration>

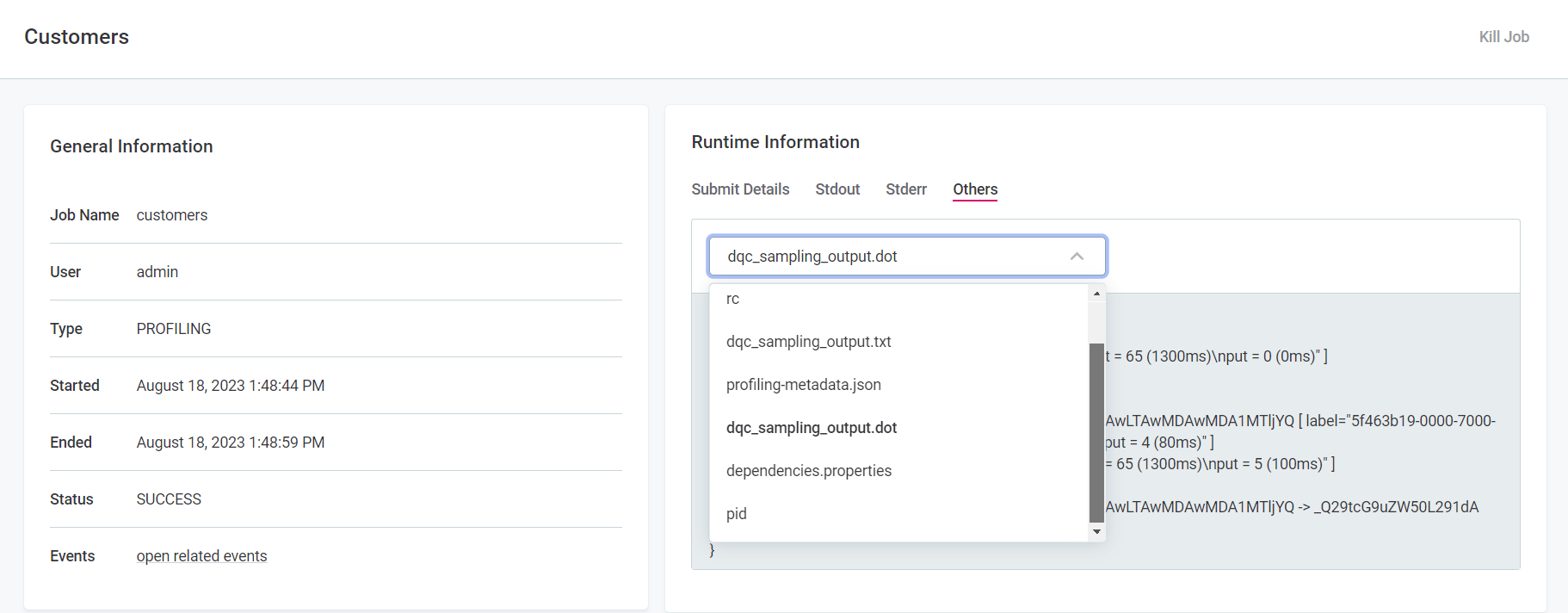

After performance profiling has been enabled, run the job again in ONE. Next, in DPM Admin Console, go to Jobs, select the job name and then switch to the Others tab.

Two files are listed: a .txt file with the raw text and a .dot file with the Graphviz source code.

You can use either file to help identify possible performance issues. However, the .dot file is better suited for more complex performance issues.

| We recommend using a graph renderer to visualize the graph defined in the source code of the .dot file. |
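Before pasting the runtime configuration shown earlier in this section into the DPM Admin Console, you might want to confirm that it is well-formed XML and that the writer file names follow the recommendation about length and special characters. The following Python sketch uses only the standard library. The helper names, the 40-character limit, and the set of characters treated as safe are assumptions made for illustration, not rules enforced by ONE.

```python
import re
import xml.etree.ElementTree as ET

# The example runtime configuration from this section.
RUNTIME_CONFIG = """<?xml version='1.0' encoding='UTF-8'?>
<runtimeConfiguration>
  <runtimeComponents>
    <runtimeComponent samplingPeriod="20" class="com.ataccama.dqc.processor.monitoring.sampling.SamplingComponent">
      <writers>
        <writer filename="dqc_sampling_output.txt" stdout="false" detailed="true" class="com.ataccama.dqc.processor.monitoring.sampling.writer.SamplingTextWriter"/>
        <writer filename="dqc_sampling_output.dot" stdout="false" class="com.ataccama.dqc.processor.monitoring.sampling.writer.SamplingDotWriter"/>
      </writers>
    </runtimeComponent>
  </runtimeComponents>
</runtimeConfiguration>
"""

# Assumption: letters, digits, dot, underscore, and hyphen count as "safe".
SAFE_NAME = re.compile(r"^[A-Za-z0-9._-]+$")


def writer_filenames(xml_text: str) -> list:
    """Parse the configuration (raises ParseError if malformed) and list writer file names."""
    root = ET.fromstring(xml_text.strip().encode("utf-8"))
    return [writer.get("filename") for writer in root.iter("writer")]


def risky_filenames(names, max_length: int = 40) -> list:
    """Return names that are missing, longer than max_length, or contain other characters."""
    return [n for n in names if not n or len(n) > max_length or not SAFE_NAME.match(n)]
```

Calling `risky_filenames(writer_filenames(RUNTIME_CONFIG))` on the example configuration returns an empty list, while a name containing a space would be reported.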

User synchronization

Imported user information is not correctly synchronized

- Problem

  Users that have been imported from Keycloak are not correctly updated in ONE. The same issue can occur in earlier releases, but as it causes the application to fail, the same solution cannot be applied.

  By default, users are synchronized by their Keycloak identifier. If you are migrating users from one Keycloak instance to another, the identifiers are generated again.

  This is why it is necessary to define how users are merged before they are imported. The following options are available:

  - `PERSON_UNIQUE_USERNAME`: Users are merged if their usernames match.
  - `PERSON_UNIQUE_EMAIL`: Users are merged if their emails match.
  - `PERSON_UNIQUE_USER_ID`: Users are merged if their Keycloak identifiers match.

  For more information about how to configure this option, see MMM Configuration, section User provider plugin configuration.

- Possible cause

  To determine what caused the synchronization issue, start by checking the Metadata Management Module (MMM) logs. To do so, follow these steps:

  - Open the MMM log file (`spring-boot-logger.json.log`). By default, in self-managed, on-premise deployments, the log file is located in the directory specified through the property `logging.file.path`. Typically, the property points to the `log` folder within the MMM installation folder (`/opt/ataccama/one/mmm-backend/log`).

    Keep in mind that the log output (file or console), format (plaintext or JSON), and location might differ from the ones provided here depending on your logging configuration. See Logging Configuration.

  - In the log file, look for the messages with one of the following action identifiers:

    - `actionId=user-synchronization status=FAILURE`
    - `actionId=user-synchronization-scheduled status=FAILURE`

  - Check whether the following details are present in the exception:

    - Exception type: `com.ataccama.one.metadata.md.intfc.transaction.ValidationsResultException`.
    - Constraint type: `UNIQUE`.

  - If the exception information matches, in the web application (Global Settings > Persons), search for the persons whose identifiers are provided in `IdsInViolation` in the log.

    The application should contain only some of these entities, as those that break the constraint are rolled back. The rolled-back entities can be found in the MMM log.

    The constraint identifier indicates the issue that prevented user synchronization:

    - `constraintId=PERSON_UNIQUE_USERNAME`: The newly imported users contain usernames that are in conflict with the `person` entities whose identifiers are provided in `IdsInViolation`.
    - `constraintId=PERSON_UNIQUE_EMAIL`: The newly imported users contain emails that are in conflict with the `person` entities whose identifiers are provided in `IdsInViolation`.
    - `constraintId=PERSON_UNIQUE_USER_ID`: The newly imported users contain user identifiers that are in conflict with the `person` entities whose identifiers are provided in `IdsInViolation`.

- Possible solution

  The issue can be resolved in one of the following ways. As all of them have some drawbacks, the most suitable solution depends on your needs and particular use case.

  - Select a different unique key for synchronization. This is done through the property `plugin.user-provider.ataccama.one.synchronization-unique-key`. If the appropriate unique key is chosen, users are correctly paired and the synchronization issue is avoided.

    In self-managed deployments, the property `plugin.user-provider.ataccama.one.synchronization-unique-key` is configured in the `application.properties` file located in the `/opt/ataccama/one/mmm-backend/etc` folder.

    For more information, see MMM Configuration, section User provider plugin configuration.

  - Delete the user whose information is causing the issue. If you have changed the synchronization unique key and the issue is still present, deleting the affected user should be a safe option. However, if a matching user is reimported later, it is not linked to the deleted user.

  - Change the value for which a conflict is reported so that it is unique. However, this might not be a viable option for practical reasons.
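The log check described under Possible cause can be partly automated. The following Python sketch scans log lines for failed synchronization actions and extracts the constraint identifier when one is present. It assumes that `actionId`, `status`, and `constraintId` appear as literal `key=value` text on the same line, which might not hold for your logging format (for example, structured JSON output can place them in separate fields). The function name is hypothetical.

```python
import re

# Matches the two action identifiers named in this guide, and nothing longer.
ACTION = re.compile(r"actionId=(user-synchronization(?:-scheduled)?)(?![\w-])")
# Matches the constraint identifiers named in this guide.
CONSTRAINT = re.compile(r"constraintId=(PERSON_UNIQUE_[A-Z_]+)")


def failed_sync_lines(lines):
    """Yield (action_id, constraint_id or None, line) for failed synchronization entries."""
    for line in lines:
        action = ACTION.search(line)
        if action is None or "status=FAILURE" not in line:
            continue
        constraint = CONSTRAINT.search(line)
        constraint_id = constraint.group(1) if constraint else None
        yield action.group(1), constraint_id, line.rstrip("\n")
```

To use it, open the MMM log file with `open(path, encoding="utf-8")` and pass the file object to `failed_sync_lines`. Lines reported without a constraint identifier still need to be inspected manually, as the exception details might be logged on a following line.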

Monitoring projects

Project fails

If a monitoring project fails, there are a few things you can check to find the cause. If you cannot identify the cause, contact the Ataccama Support team.

- In the DPM job log, scroll until you see `exceptions`. If the job is listed there, in most instances a description of the error is provided (for example, unable to reach the data source, or the source table structure changed and the fields are no longer available).
- If the job is not listed in the DPM log, check the Processing Center to see if there are any errors listed.

If you still cannot locate a reason, try the following:

- Profile the catalog items that the monitoring project is using. Take note of any errors if that fails.
- Double-check the rule mappings in the project to see if they make sense.
- Check if the monitoring project configuration page flags any errors.
- Check that a set of connection credentials is configured as default.
- Check if any mandatory fields are missing or incorrect in the configuration of DQ dimensions used in rules in the project.

  Breaking errors are as follows:

  - Missing Name for dimension or result.
  - Missing Order for dimension or result.
  - Missing Color for result.
  - No result in the dimension selected as Main result.
  - Missing Default condition result.
  - Missing Default fallback result.
  - Results for Validity dimension changed from Valid and Invalid to something else, or missing results for Validity dimension.
  - One or more result or dimension Name is not unique.

Lookups

Uploading a lookup file fails

- Problem

  When trying to manually upload a lookup file (Lookup Items tab), it fails with the following error: `Upload of <user_file>.lkp failed`.

- Possible cause

  To determine what caused the upload issue, open the log file and look for the error message. If the error is `Refused to connect to <URL>`, the property that allows the connection from ONE to ONE Object Storage (MinIO) is likely misconfigured or missing.

- Possible solution

  Make sure the MinIO URL is correctly configured in the property `ataccama.one.webserver.content-security.extra-urls`:

  `ataccama.one.webserver.content-security.extra-urls={'img-src':{'<link_to_minio>'}, 'connect-src':{'<link_to_minio>'}}`

  | Depending on how your configuration is managed, the property For more information, see ONE Web Application Configuration. |
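The nested braces and quotes in the `extra-urls` value are easy to mistype. As an illustration only, the following Python sketch builds the property line from a MinIO URL in the format shown above. The function name and the example URL are hypothetical, and the check that the URL starts with `http` or `https` is an assumption added to catch obvious typos, not a documented requirement.

```python
from urllib.parse import urlsplit

PROPERTY = "ataccama.one.webserver.content-security.extra-urls"


def extra_urls_property(minio_url: str) -> str:
    """Return the property line allowing image and connect requests to the given URL."""
    parts = urlsplit(minio_url)
    # Assumption: a usable MinIO link includes a scheme and a host.
    if parts.scheme not in ("http", "https") or not parts.netloc:
        raise ValueError(f"not an absolute http(s) URL: {minio_url!r}")
    return (
        f"{PROPERTY}="
        f"{{'img-src':{{'{minio_url}'}}, 'connect-src':{{'{minio_url}'}}}}"
    )
```

For instance, `print(extra_urls_property("https://minio.example.com"))` prints a line you can compare character by character against the value in your configuration.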

Profiling jobs failing with missing lookup

If profiling is failing due to missing lookup items, you can recreate the lookups from ONE:

-

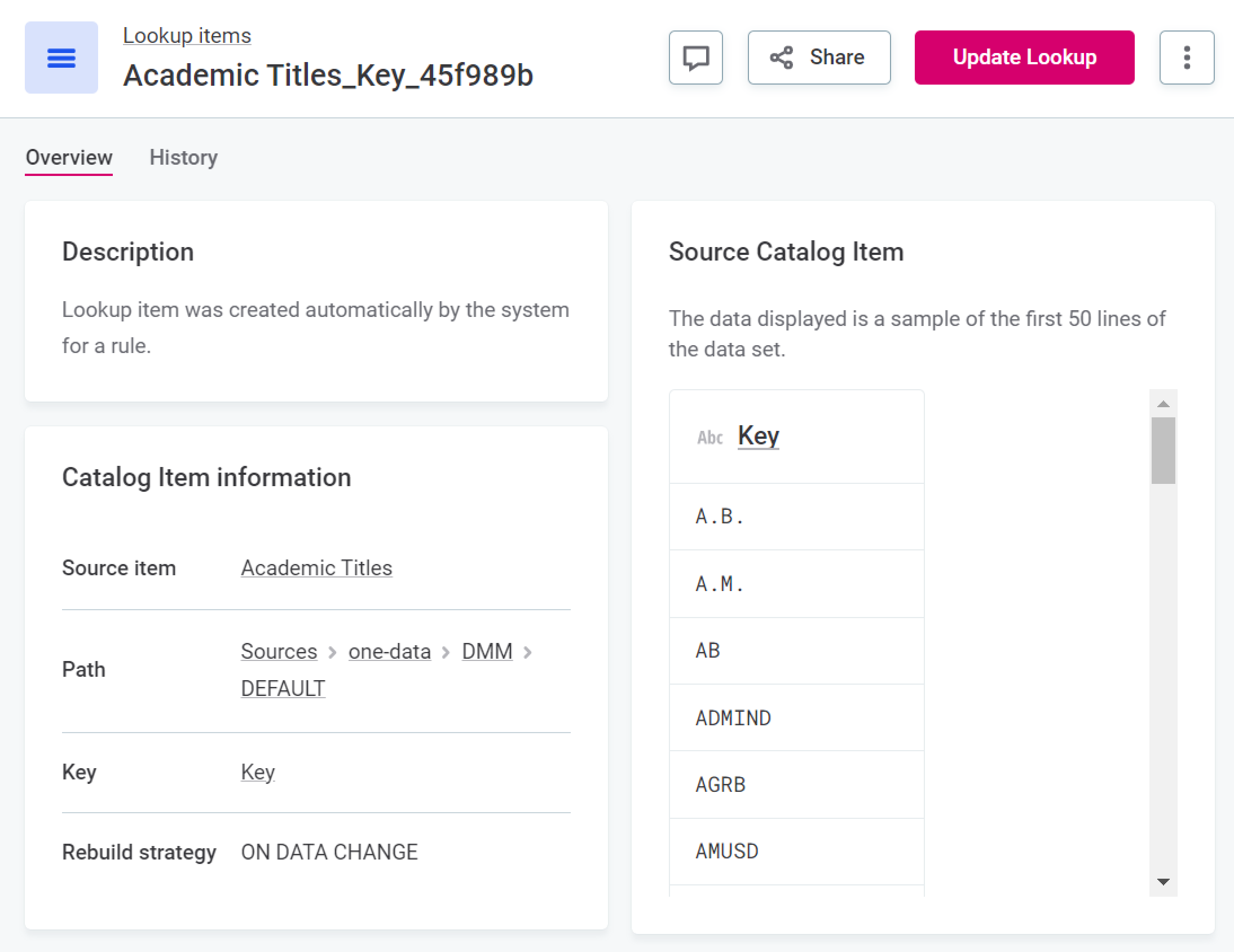

If the original lookup is based on a catalog item, it can be rebuilt from the source catalog item. To do this, select the required lookup item and then Update Lookup.

-

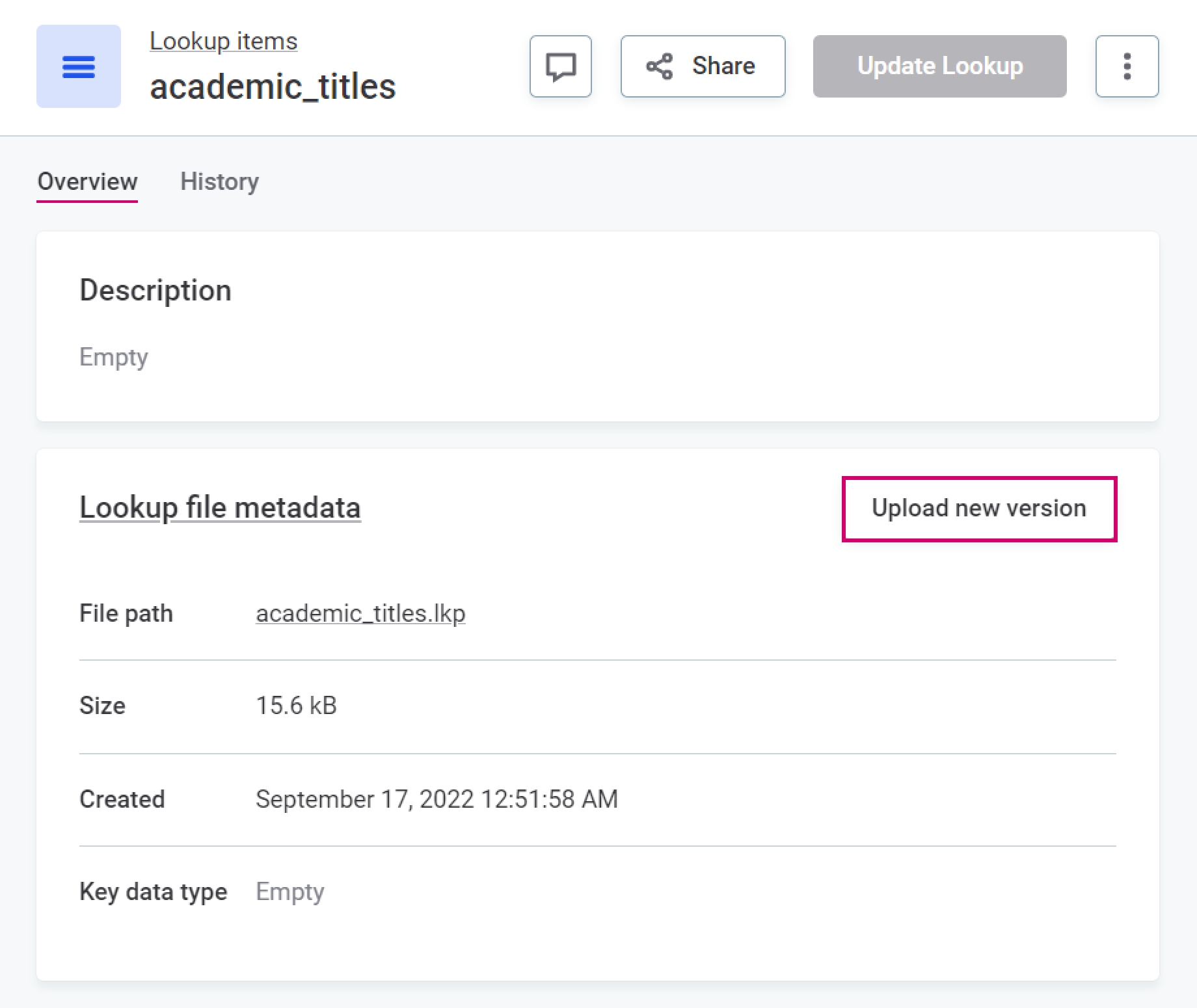

If this option is not available to you, it means the lookup item was not built from a catalog item and must instead be reuploaded. To do this, select the lookup item, and in the Lookup file metadata section, select Upload new version.

Publish changes and retry profiling.

Rule can’t be published

When rule input names contain special characters or reserved words, the rule cannot be published due to a clash with the rule expression parser. However, when you are using the Condition Builder, no error is shown.

To avoid this issue, the input names must be enclosed in square brackets, for example:

- (special character: space) `input 1` → `[input 1]`
- (reserved words) `in` → `[in]`

| To check if a word or character clashes with the expression parser, switch the rule configuration to Advanced Expression where errors are correctly displayed. |

Reserved words are: `in`, `is`, `and`, `not`, `or`, `xor`, `div`.
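The bracketing rule can be expressed as a small helper, for example when you generate rule expressions from a list of input names. This Python sketch is an illustration under stated assumptions: it treats any name that is not a plain identifier (letters, digits, underscores, not starting with a digit) as containing special characters, and it compares reserved words case-insensitively. Neither detail is confirmed by this guide, and the function name is hypothetical.

```python
import re

# Reserved words as listed in this guide.
RESERVED_WORDS = {"in", "is", "and", "not", "or", "xor", "div"}

# Assumption: names outside this pattern contain "special" characters.
PLAIN_NAME = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def expression_name(input_name: str) -> str:
    """Return the input name, enclosed in square brackets when it needs them."""
    if input_name.startswith("[") and input_name.endswith("]"):
        return input_name  # already bracketed
    if input_name.lower() in RESERVED_WORDS or not PLAIN_NAME.match(input_name):
        return f"[{input_name}]"
    return input_name
```

Bracketing a name that does not strictly need it is the conservative choice here, which is why the helper errs on the side of adding brackets.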

Search

Full-text search not working due to the 'Socket closed' error

- Problem

-

Full-text search is broken for some users. Instead of search results, you see a

Full-text Search is experiencing problemserror and after refreshing aSocket closederror message. - Cause

-

Ataccama ONE communicates with the web browser using WebSocket. If your proxy removes headers from server responses, the WebSocket communication cannot be established between the browser and the servers where the Ataccama ONE is installed. Consequently, the servers cannot push notifications to the client browser, causing issues such as non-functional search.

WebSocket is used for all functionalities that are updated in real time while you’re on the page. This includes (but is not limited to):

-

Search status.

-

Processing Center notifications.

-

Notifications about the progress of monitoring projects, term suggestions, and documentation flow (that is, the

Running documentation processmessage at the top of a source page). -

Notification about another user changing the application mode (for example, to update schema).

-

Some options that use a toggle switch in the application - for example, the Show only attributes with Rules switch on the catalog item Data Quality tab.

To confirm that the issue is with the WebSocket communication, open the developer tools of your browser and look for the

Error during WebSocket handshake: 'Connection' header is missingerror message. You can find it in the following places:-

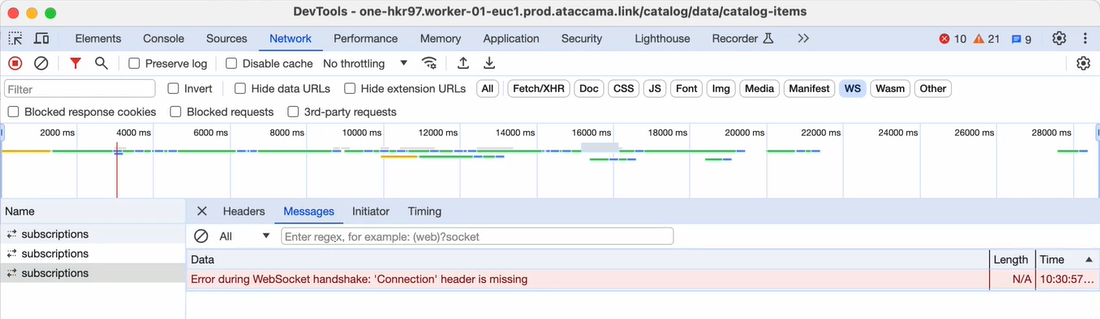

Network tab > WS > subscriptions > Messages:

-

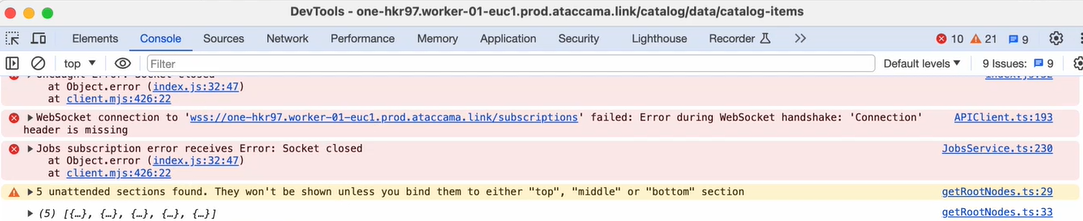

Console tab:

-

- Solution

-

To solve the issue, your network administrator must allowlist the

Connectionheader for network communication for the platform domain (for example,<customer_domain>), subdomain (for example,one.<customer_domain>), or link, with the latter being more strict than the previous.
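To see why a stripped `Connection` header breaks the connection, recall that a WebSocket opening handshake (RFC 6455) succeeds only if the server response has status 101 and carries the `Upgrade`, `Connection`, and `Sec-WebSocket-Accept` headers. The following Python sketch checks a captured response against those requirements. It is a diagnostic illustration, not something ONE provides, and the function name is hypothetical. You would copy the status and response headers from the browser developer tools or from proxy logs.

```python
def handshake_problems(status: int, headers: dict) -> list:
    """List reasons why a response is not a valid WebSocket opening handshake."""
    # Header names are case-insensitive, so normalize them first.
    normalized = {name.lower(): value for name, value in headers.items()}
    problems = []
    if status != 101:
        problems.append(f"expected status 101, got {status}")
    if normalized.get("upgrade", "").strip().lower() != "websocket":
        problems.append("'Upgrade' header is missing or is not 'websocket'")
    # The Connection header is a comma-separated token list.
    tokens = [t.strip().lower() for t in normalized.get("connection", "").split(",")]
    if "upgrade" not in tokens:
        problems.append("'Connection' header is missing or does not contain 'Upgrade'")
    if "sec-websocket-accept" not in normalized:
        problems.append("'Sec-WebSocket-Accept' header is missing")
    return problems
```

If the only reported problem is the `Connection` header, the response matches the situation described in this section, where an intermediate proxy removes the header before it reaches the browser.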

SMTP

- Problem

  When trying to send mail, it fails with the following error: `SMTPSendFailedException: 530 Authentication required`.

- Solution

  Confirm you have passed the parameters `-Dmail.smtp.auth=true`, `-Dmail.smtp.starttls.enable=true`, and `-Dmail.smtp.ssl.protocols=TLSv1.2` to the relevant JVM.

  The Sendmail step can be run on a DQIT server, a ONE Runtime server, or as a DPE job. If running as a DPE job, the parameters must be set in `plugin.executor-launch-model.ataccama.one.launch-type-properties.LOCAL.env.JAVA_OPTS`.

  For more information, see Executor configuration in DPE Configuration.

Connectivity issues

- Problem

  When trying to connect to your data source, it fails with either an `unknown host` or `timeout` error. The cause might be in ONE, or on the infrastructure or network level, for example, DNS, VPN, firewall, or IP address allowlisting.

- Possible solution

  Use command line tools to determine if ONE is the cause of the problem. Depending on your platform, you can use `curl`, `telnet`, `openssl`, or `nc`, for example:

  - `curl -v telnet://<hostname>:<port>`
  - `curl -v www.googleapis.com`
  - `telnet <hostname> <port>`
  - `openssl s_client -connect <hostname>:<port>`
  - `nc <hostname> <port>`

  | The curl command also displays some useful SSL handshake information, including the Subject and Issuer fields of the server SSL certificate. |
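If none of the command line tools is available on the host, a plain TCP probe gives similar information. The following Python sketch, which uses only the standard library, attempts a connection and maps the outcome to the error types mentioned in this section. The function name and the returned labels are hypothetical. The probe checks reachability only and does not perform a TLS handshake.

```python
import socket


def probe(hostname: str, port: int, timeout: float = 5.0) -> str:
    """Try to open a TCP connection and describe the outcome."""
    try:
        with socket.create_connection((hostname, port), timeout=timeout):
            return "ok"
    except socket.gaierror:
        # Name resolution failed: points to DNS rather than to ONE.
        return "unknown host"
    except socket.timeout:
        # No answer in time: often a firewall, VPN, or allowlisting issue.
        return "timeout"
    except ConnectionRefusedError:
        # Host reachable, but nothing is listening on that port.
        return "refused"
    except OSError as error:
        return f"error: {error}"
```

Run the probe from the same machine that runs the failing component. If it reports the same `unknown host` or `timeout` outcome, the cause is more likely on the infrastructure or network level than in ONE.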

Data sources

For help troubleshooting data source connectivity issues, see the Troubleshooting section in Data Sources Configuration.
